Both Literature Reviews and Systematic Reviews Are Types of Research Evidence Reviews

- Review

- Open Access

- Published:

What are the best methodologies for rapid reviews of the research evidence for evidence-informed decision making in health policy and practice: a rapid review

Health Research Policy and Systems volume 14, Article number: 83 (2016)

Abstract

Background

Rapid reviews have the potential to overcome a central barrier to the use of research evidence in decision making, namely the lack of timely and relevant research. This rapid review of systematic reviews and primary studies sought to answer the question: What are the best methodologies to enable a rapid review of research evidence for evidence-informed decision making in health policy and practice?

Methods

This rapid review utilised systematic review methods and was conducted according to a pre-defined protocol including clear inclusion criteria (PROSPERO registration: CRD42015015998). A comprehensive search strategy was used, including published and grey literature, written in English, French, Portuguese or Spanish, from 2004 onwards. Eleven databases and two websites were searched. Two review authors independently applied the eligibility criteria. Data extraction was done by one reviewer and checked by a second. The methodological quality of included studies was assessed independently by two reviewers. A narrative summary of the results is presented.

Results

Five systematic reviews and one randomised controlled trial (RCT) that investigated methodologies for rapid reviews met the inclusion criteria. None of the systematic reviews were of sufficient quality to allow firm conclusions to be made. Thus, the findings need to be treated with caution. There is no agreed definition of rapid reviews in the literature and no agreed methodology for conducting rapid reviews. While a wide range of 'shortcuts' are used to make rapid reviews faster than a full systematic review, the included studies found little empirical evidence of their impact on the conclusions of either rapid or systematic reviews. There is some evidence from the included RCT (which had a low risk of bias) that rapid reviews may improve clarity and accessibility of research evidence for decision makers.

Conclusions

Greater care needs to be taken in improving the transparency of the methods used in rapid review products. There is no evidence available to suggest that rapid reviews should not be done or that they are misleading in any way. We offer an improved definition of rapid reviews to guide future research as well as clearer guidance for policy and practice.

Background

In May 2005, the World Health Assembly called on WHO Member States to "establish or strengthen mechanisms to transfer knowledge in support of evidence-based public health and healthcare delivery systems, and evidence-based health-related policies" [1]. Knowledge translation has been defined by WHO as: "the synthesis, exchange, and application of knowledge by relevant stakeholders to accelerate the benefits of global and local innovation in strengthening health systems and improving people's health" [2]. Knowledge translation seeks to address the challenges to the use of scientific evidence in order to close the gap between the evidence generated and the decisions being made.

To achieve better translation of knowledge from research into policy and practice, it is important to be aware of the barriers and facilitators that influence the use of research evidence in health policy and practice decision making [3–8]. The most frequently reported barriers to evidence uptake are poor access to good quality relevant research and lack of timely and relevant research output [7, 9]. The most frequently reported facilitators are collaboration between researchers and policymakers, improved relationships and skills [7], and research that accords with the beliefs, values, interests or practical goals and strategies of decision makers [10].

In relation to access to good quality relevant research, systematic reviews are considered the gold standard and are used as a basis for products such as practice guidelines, health technology assessments, and evidence briefs for policy [11–14]. However, there is a growing demand to provide these evidence products faster and with the needs of the decision-maker in mind, while also maintaining credibility and technical quality. This should help to overcome the barrier of lack of timely and relevant research, thereby facilitating their use in decision making. With this in mind, a range of methods for rapid reviews of the research evidence have been developed and put into practice [15–18]. These frequently include modifications to systematic review methods to make them faster than a full systematic review. Some examples of modifications that have been made include (1) a more targeted research question/reduced scope; (2) a reduced list of sources searched, including limiting these to specialised sources (e.g. of systematic reviews, economic evaluations); (3) articles searched in the English language only; (4) reduced timeframe of search; (5) exclusion of grey literature; (6) use of search tools that make it easier to find literature; and (7) use of only one reviewer for study selection and/or data extraction. Given the emergence of this approach, it is important to develop a knowledge base regarding the implications of such 'shortcuts' on the strength of evidence being delivered to decision makers. At the time of conducting this review, we were not aware of any high quality systematic reviews on rapid reviews and their methods.

It is important to note that a range of terms have been used to describe rapid reviews of the research evidence, including evidence summaries, rapid reviews, rapid syntheses, and brief reviews, with no clear definitions [15, 16, 18–22]. In this paper, we have used the term 'rapid review', despite starting with the term 'rapid evidence synthesis' in our protocol, as it became clear during the conduct of our review that it is the most widely used term in the literature [23]. We consider a broad range of rapid reviews, including rapid reviews of effectiveness, problem definition, aetiology, diagnostic tests, and reviews of cost and cost-effectiveness.

The rapid review presented in this article is part of a larger project aimed at designing a rapid response programme to support evidence-informed decision making in health policy and practice [24]. The expectation is that a rapid response will facilitate the use of research for decision making. We have labelled this study as a rapid review because it was conducted in a limited timeframe and with the needs of health policy decision-makers in mind. It was commissioned by policy decision-makers for their immediate use.

The objective of this rapid review was to use the best available evidence to answer the following research question: What are the best methodologies to enable a rapid review of research evidence for evidence-informed decision making in health policy and practice? Both systematic reviews and primary studies were included. Note that we have deliberately used the term 'best methodologies' as it is likely that a variety of methods will be needed depending on the research question, timeframe and needs of the decision maker.

Methods

This rapid review used systematic review methodology and adheres to the Preferred Reporting Items for Systematic Reviews and Meta-Analyses statement [25]. A systematic review protocol was written and registered prior to undertaking the searches [26]. Deviations from the protocol are listed in Additional file 1.

Inclusion criteria for studies

Studies were selected based on the inclusion criteria stated below.

Types of studies

Both systematic reviews and primary studies were sought. For inclusion, priority was given to systematic reviews and to primary studies that used one of the following designs: (1) individual or cluster randomised controlled trials (RCTs) and quasi-randomised controlled trials; (2) controlled before and after studies where participants are allocated to control and intervention groups using non-randomised methods; (3) interrupted time series with before and after measurements (and preferably with at least three measures); and (4) cost-effectiveness/cost-utility/cost-benefit studies. Other types of studies were also identified for consideration for inclusion in case no systematic reviews and few primary studies with strong study designs (as indicated above in 1–4) could be found. They were initially selected provided that they described some type of evaluation of methodologies for rapid reviews.

Types of participants

Apart from needing to be within the field of health policy and practice, the types of participants were not restricted, and the level of analysis could be at the level of the individual, organisation, system or geographical area. During the study selection process, we made a decision to also include 'products', i.e. papers that include rapid reviews as the unit of inclusion rather than people.

Types of articles/interventions

Studies that evaluated methodologies or approaches to rapid reviews for health policy and/or practice, including systematic reviews, practice guidelines, technology assessments, and evidence briefs for policy, were included.

Types of comparisons

Suitable comparisons (where relevant to the article type) included no intervention, another intervention, or current practice.

Types of outcome measures

Relevant outcome measures included time to complete; resources required to complete (e.g. cost, personnel); measures of synthesis quality; measures of efficiency of methods (measures that combine aspects of quality with time to complete, e.g. limiting data extraction to key characteristics and results that may reduce the time needed to complete without impacting on review quality); satisfaction with methods and products; and implementation. During the study selection process the authors agreed to include two additional outcomes that were not in the published protocol but were important for the review, namely comparison of findings between the different synthesis methods (e.g. rapid vs. systematic review) and cost-effectiveness.

Publications in English, French, Portuguese or Spanish, from any country and published from 2004 onwards were included. The year 2004 was chosen as this is the year of the Mexico Ministerial Summit on Health Research, where the know-do gap was first given serious attention by health ministers [27]. Both grey and peer-reviewed literature were sought and included.

Search methods for identification of studies

A comprehensive search of eleven databases and two websites was conducted. The databases searched were CINAHL, the Cochrane Library (including Cochrane Reviews, the Database of Abstracts of Reviews of Effectiveness, the Health Technology Assessment database, NHS Economic Evaluation Database, and the database of Methods Studies), EconLit, EMBASE, Health Systems Evidence, LILACS and Medline. The websites searched were Google and Google Scholar.

Grey literature and manual search

Some of the selected databases index a combination of published and unpublished studies (for example, doctoral dissertations, conference abstracts and unpublished reports); therefore, unpublished studies were partially captured through the electronic search process. In addition, Google and Google Scholar were searched. The authors' own databases of knowledge translation literature were also searched by hand for relevant studies. The reference list of each included study was searched. Contact was made with nine key authors and experts in the area for further studies, of whom five responded.

Search strategy

Searches were conducted between 15th January and 3rd February 2015 and supplementary searches (reference lists, contact with authors) were conducted in May 2015. Databases were searched using the keywords: "rapid literature review*" OR "rapid systematic review*" OR "rapid scoping review*" OR "rapid review*" OR "rapid approach*" OR "rapid synthesis" OR "rapid syntheses" OR "rapid evidence assess*" OR "evidence summar*" OR "realist review*" OR "realist synthesis" OR "realist syntheses" OR "realist evaluation" OR "meta-method*" OR "meta method*" OR "realist approach*" OR "meta-evaluation*" OR "meta evaluation*". Keywords were searched for in title and abstract, except where otherwise stated in Additional file 2. Results were downloaded into the EndNote reference management program (version X7) and duplicates removed. The Internet search utilised the search terms: "rapid review"; "rapid systematic review"; "realist review"; "rapid synthesis"; and "rapid evidence".
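The database keyword string above is a plain OR-combination of quoted, truncated phrases. As a minimal sketch (not the authors' tooling), the Python below assembles that string from the keyword list so that the terms and OR operators stay consistent. The keyword list is copied from the text; `build_or_query` is a hypothetical helper. Restriction to title and abstract is deliberately left out, because each database (Medline, EMBASE, CINAHL and so on) uses its own field-tag syntax.

```python
# Keyword phrases copied from the search strategy; * marks truncation.
KEYWORDS = [
    "rapid literature review*",
    "rapid systematic review*",
    "rapid scoping review*",
    "rapid review*",
    "rapid approach*",
    "rapid synthesis",
    "rapid syntheses",
    "rapid evidence assess*",
    "evidence summar*",
    "realist review*",
    "realist synthesis",
    "realist syntheses",
    "realist evaluation",
    "meta-method*",
    "meta method*",
    "realist approach*",
    "meta-evaluation*",
    "meta evaluation*",
]


def build_or_query(terms):
    """Quote each phrase and join the phrases with the Boolean OR operator.

    Hypothetical helper: field tags (title/abstract) are database-specific
    and would have to be added separately for each database searched.
    """
    return " OR ".join(f'"{term}"' for term in terms)


query = build_or_query(KEYWORDS)
print(query)
```

Generating the string from a single list avoids the kind of inconsistency that creeps in when long Boolean strings are edited by hand (for example, a stray lowercase "or").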

Screening and selection of studies

Searches were conducted and screened according to the selection criteria by one review author (MH). The full text of any potentially relevant papers was retrieved for closer examination. This reviewer erred on the side of inclusion where there was any doubt about a paper's inclusion, to ensure no potentially relevant papers were missed. The inclusion criteria were then applied against the full text version of the papers (where available) independently by two reviewers (MH and RC). For studies in Portuguese and Spanish, other authors (EC, LR or JB) played the role of second reviewer. Disagreements regarding eligibility of studies were resolved by discussion and consensus. Where the two reviewers were still uncertain about inclusion, the other reviewers (EC, LR, JB, JL) were asked to provide input to reach consensus. All studies which initially appeared to meet the inclusion criteria but, on inspection of the full text paper, did not were detailed in a table 'Characteristics of excluded systematic reviews', together with reasons for their exclusion.
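The core of the independent dual-review step is comparing two reviewers' eligibility decisions and routing every disagreement to discussion. The Python below is an illustrative sketch under stated assumptions: the record IDs and the `split_decisions` helper are hypothetical, decisions are reduced to a simple include/exclude flag, and the review itself did not publish any screening code.

```python
from typing import Dict, List, Tuple


def split_decisions(
    reviewer_a: Dict[str, bool], reviewer_b: Dict[str, bool]
) -> Tuple[List[str], List[str], List[str]]:
    """Split records into agreed includes, agreed excludes and conflicts.

    Each dict maps a hypothetical record ID to True (include) or
    False (exclude). Both reviewers must have screened the same records.
    """
    if reviewer_a.keys() != reviewer_b.keys():
        raise ValueError("both reviewers must screen the same records")
    include, exclude, conflict = [], [], []
    for record in sorted(reviewer_a):
        a, b = reviewer_a[record], reviewer_b[record]
        if a and b:
            include.append(record)
        elif not a and not b:
            exclude.append(record)
        else:
            # Disagreement: resolved by discussion, escalating to the
            # other reviewers if the pair remains uncertain.
            conflict.append(record)
    return include, exclude, conflict


reviewer_1 = {"rec-01": True, "rec-02": False, "rec-03": True}
reviewer_2 = {"rec-01": True, "rec-02": False, "rec-03": False}
included, excluded, to_discuss = split_decisions(reviewer_1, reviewer_2)
print(included, excluded, to_discuss)  # ['rec-01'] ['rec-02'] ['rec-03']
```

Keeping conflicts as an explicit list, rather than letting either reviewer's decision win by default, mirrors the consensus step described above.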

Application of the inclusion criteria by the two reviewers was performed as follows. First, all studies that met the inclusion criteria for participants, interventions and outcomes were selected, provided that they described some type of evaluation of methodologies for rapid evidence synthesis. At this stage, the study type was assessed and categorised by the two reviewers as being (1) a systematic review; (2) a primary study with a strong study design, i.e. one of the four types identified above; or (3) an 'other' study design (that provided some type of evaluation of methodologies for rapid evidence synthesis). The reason for this was to enable the reviewers to make a decision as to which study designs should be included (based on available evidence, it was not known whether sufficient evidence would be found if only systematic reviews and primary studies with strong study designs were included from the outset) and because of interest from the funders in other study types. Following discussion between all co-authors, it was decided that sufficient evidence was likely to be provided by the first two categories of study type. Thus, studies in the third group were excluded from data extraction but are listed in Additional file 3.

Data extraction

Data extracted from studies and reviewed included objectives, target population, method/s tested, outcomes reported, country of study/studies and results. For systematic reviews we also extracted the date of last search, the included study designs and the number of studies. For primary studies, we also extracted the year of study, the study design and the population size. Data extraction was performed by one reviewer (MH) and checked by a second reviewer (RC). Disagreements were resolved through discussion and consensus.

Assessment of methodological quality

The methodological quality of included studies was assessed independently by two reviewers using AMSTAR: A MeaSurement Tool to Assess Reviews [28] for systematic reviews and the Cochrane Risk of Bias Tool for RCTs [29]. Disagreements in scoring were resolved by discussion and consensus. For this review, systematic reviews that achieved AMSTAR scores of 8 to 11 were considered high quality; scores of 4 to 7 medium quality; and scores of 0 to 3 low quality. These cut-offs are commonly used in Cochrane Collaboration overviews. The quality assessment was used to interpret the studies' results when synthesised in this review and in the formulation of conclusions.
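The AMSTAR cut-offs above amount to a simple banding rule over the 0 to 11 score range. The sketch below encodes that rule in Python (the function name `amstar_band` is hypothetical) and applies it to the scores reported for the five included systematic reviews (four scoring 2 and one scoring 4), reproducing the low/medium classification given in the Results.

```python
from collections import Counter


def amstar_band(score: int) -> str:
    """Return the quality band for an AMSTAR score (0 to 11 points)."""
    if not 0 <= score <= 11:
        raise ValueError("AMSTAR scores range from 0 to 11")
    if score >= 8:  # 8-11: high quality
        return "high"
    if score >= 4:  # 4-7: medium quality
        return "medium"
    return "low"    # 0-3: low quality


# Scores of the five included systematic reviews, as reported in this review.
included_scores = [2, 2, 2, 2, 4]
bands = [amstar_band(s) for s in included_scores]
print(Counter(bands))  # Counter({'low': 4, 'medium': 1})
```

Checking the boundaries explicitly (7 versus 8, 3 versus 4) is the main point of writing the rule down: an off-by-one in the cut-offs would silently move a review between bands.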

Data analysis

Findings from the included publications were synthesised using tables and a narrative summary. Meta-analysis was not possible because the included studies were heterogeneous in terms of the populations, methods and outcomes tested.

Results

Search results

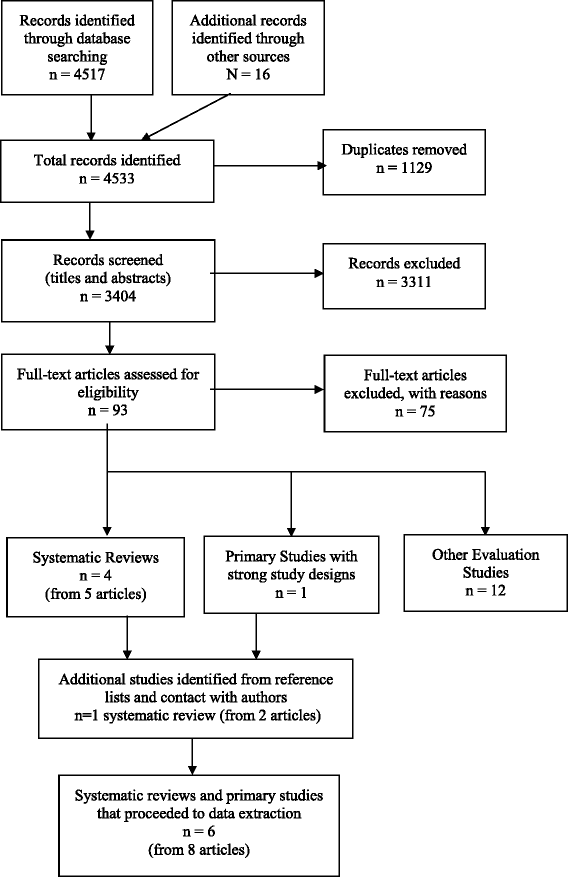

Five systematic reviews (from seven articles) [18, 19, 21, 30–33] and one primary study with a strong study design, an RCT [34], met the inclusion criteria for the review. The selection process for studies and the numbers at each stage are shown in Fig. 1. The reasons for exclusion of the 75 papers at full text stage are shown in Additional file 3. The 12 evaluation studies excluded from data extraction due to weak study designs are also listed at the end of Additional file 3.

Fig. 1 Study selection flow chart – Methods for rapid reviews

Characteristics of included studies and quality assessment

Characteristics of the included systematic reviews are summarised in Table 1, with full details provided in Additional file 4. All rapid reviews were targeted at healthcare decision makers and/or agencies conducting rapid reviews (including rapid health technology assessments). Only two of the systematic reviews offered a definition of "rapid review" to guide their reviews [19, 30, 33]. Three of the systematic reviews obtained samples of rapid review products (though not necessarily randomly) and examined aspects of the methods used in their production [18, 19, 21]. Three of the systematic reviews reviewed articles on rapid review methods [18, 30, 32]. Two of these also included a comparison of findings from rapid reviews and systematic reviews conducted on the same topic [18, 32].

None of the systematic reviews that were identified examined the outcomes of resources required to complete, synthesis quality, efficiency of methods, satisfaction with methods and products, implementation, or cost-effectiveness. However, while not explicitly assessing synthesis/review quality, all of the reviews did report the methods used to conduct the rapid reviews. We have reported these details as they give an indication of the quality of the review. Therefore, the outcomes reported in the included systematic reviews and recorded in Table 1 and Additional file 4 do not align perfectly with those proposed in our inclusion criteria. In addition, we have included some data that were not pre-defined but which we extracted because they provided important contextual information, e.g. type of product, definition, rapid review initiation and rationale, nomenclature, and content. The reporting of the results was further complicated by the use of a narrative, rather than a quantitative, synthesis of the results in the included studies.

It is not possible to say how many unique studies are included in these systematic reviews because only one review actually included a list of included studies [30] and one a 'characteristics of included studies' table (but not in a form that was easy to use) [21]. However, it is clear that there is likely to be significant overlap in studies between reviews. For example, the most recent systematic review by Hartling et al. [31, 32] also included the four previous systematic reviews included in this rapid review [18, 19, 21, 30, 33].

The RCT was targeted at healthcare professionals involved in clinical guideline development [34]. It aimed to assess the effectiveness of different evidence summary formats for use in clinical guideline development. Three different packs were tested – pack A: a systematic review alone; pack B: a systematic review with summary-of-findings tables included; and pack C: an evidence synthesis and systematic review. Pack C is described by the authors of the study as: "a locally prepared, short, contextually framed, narrative report in which the results of the systematic review (and other evidence where relevant) were described and locally relevant factors that could influence the implementation of evidence-based guideline recommendations (e.g. resource capacity) were highlighted" [34]. We interpreted pack C as being a 'rapid review' for the purposes of this review because the authors state that it is based on a comprehensive search and critical appraisal of the best currently available literature, which included a Cochrane review, an overview of systematic reviews and RCTs, and additional RCTs, but was likely to have been done in a short timeframe. It was also conducted to help improve decision-making. The primary outcome measured was the proportion of correct responses to key clinical questions, whilst the secondary outcome was a composite score comprising clarity of presentation and ease of locating the quality of evidence [34]. This study was not included in any previous systematic reviews.

Four of the systematic reviews obtained AMSTAR scores of 2 (low quality) and one a score of 4 (medium quality). No high quality systematic reviews were found. Thus, the findings of the systematic reviews should be taken as indicative only and no firm conclusions can be made. The RCT was classified as low risk of bias on the Cochrane Risk of Bias tool. The quality assessments can be found in Additional file 5.

Findings

Definition of a 'rapid review'

The five systematic reviews are consistent in stating that there is no agreed definition of rapid reviews and no agreed methodology for conducting rapid reviews [18, 19, 21, 30–33]. According to the authors of one review: "the term 'rapid review' does not appear to have one single definition but is framed in the literature as utilizing various stipulated time frames between 1 and 6 months" [21, p. 398]. The definitions offered to guide the reviews by Abrami et al. [19] and Cameron et al. [30] both use a timeframe of up to 6 months (Table 1). Cameron et al. [30] also include in their definition the requirement that the review contains the elements of a comprehensive search, though they do not offer criteria to assess this.

Abrami et al. [19] use the term 'brief review' rather than 'rapid review' to emphasise that both timeframe and scope may be affected. They write that "a brief review is an examination of empirical evidence that is limited in its timeframe (e.g. 6 months or less to complete) and/or its scope, where scope may include:

- the breadth of the question being explored (e.g. a review of one-to-one laptop programs versus a review of technology integration in schools);

- the timeliness of the evidence included (e.g. the last several years of research versus no time limits);

- the geographic boundaries of the evidence (e.g. inclusion of regional or national studies only versus international evidence);

- the depth and detail of analyses (e.g. reporting only overall findings versus also exploring variability among the findings); or

- otherwise more restrictive study inclusion criteria than might be seen in a comprehensive review." [19, p. 372].

All other included systematic reviews used the term 'rapid review' or 'rapid health technology assessment' to describe rapid reviews.

Methods used based on examples of rapid reviews

While the word 'rapid' indicates that the review will be carried out quickly, there is no consistency in published rapid reviews as to how it is made rapid and which part, or parts, of the review are carried out at a faster pace than in a full systematic review [18, 19, 21]. A further complexity is the reporting of methods used in the rapid review, with about 43% of the rapid reviews examined by Abrami et al. [19] not describing their methodology comprehensively. Three examples of 'shortcuts' taken are (1) not using two reviewers for study selection and/or data extraction; (2) not conducting a quality assessment of included studies; and (3) not searching for grey literature [18, 19, 21]. However, it is important to note that the rapid reviews examined in these three systematic reviews were not necessarily selected randomly and, thus, it is not possible to accurately quantify the proportion of rapid reviews taking various 'shortcuts' or which 'shortcuts' are the most common. The time taken for the reviews examined varied from several days to one year [19]; 3 weeks to 6 months [18]; and 7–12 (mean 10.42, SD 7.1) months [21].

Methods used based on studies of rapid review methods

Methodological approaches or 'shortcuts' used in rapid reviews to make them faster than a full systematic review include [18, 19, 32] limiting the number of questions; limiting the scope of questions; searching fewer databases; limited use of grey literature; restricting the types of studies included (e.g. English only, most recent 5 years); relying on existing systematic reviews; eliminating or limiting hand searching of reference lists and relevant journals; narrow time frame for article retrieval; using a non-iterative search strategy; eliminating consultation with experts; limiting full-text review; limiting dual review for study selection, data extraction and/or quality assessment; limiting data extraction; limiting risk of bias assessment or grading; minimal evidence synthesis; providing minimal conclusions or recommendations; and limiting external peer review. Harker et al. [21] found that fewer 'shortcuts' were used as timeframes increased and that, with longer timeframes, risk of bias assessment, evidence grading and external peer review were more likely to be conducted [21].

None of the included systematic reviews offer firm guidelines for the methodology underpinning rapid reviews. Rather, they report that many articles written about rapid reviews offer only examples and discussion surrounding the complexity of the area [30].

Supporting evidence for shortcuts

While authors of the included systematic reviews tend to agree that changes to scope or timeframe can introduce biases (e.g. selection bias, publication bias, language of publication bias), they found little empirical evidence to support or refute that claim [18, 19, 21, 30, 32].

The review by Ganann et al. [18] included 45 methodological studies that considered issues such as the impact of limiting the number of databases searched, hand searching of reference lists and relevant journals, omitting grey literature, only including studies published in English, and omitting quality assessment. However, they were unable to provide clear methodological guidelines based on the findings of these studies.

Comparison of findings – rapid reviews versus systematic reviews

A key question is whether the conclusions of a rapid review are fundamentally different from those of a full systematic review, i.e. whether they are sufficiently different to change the resulting decision. This is an area where the research is extremely limited. There are few comparisons of full and rapid reviews available in the literature with which to determine the impact of the above methodological changes; only two primary studies were reported in the included systematic reviews [35, 36]. It is important to note that neither of these studies, on its own, met the inclusion criteria for the review, as neither had a sufficiently strong study design. Both are included in the list of 12 studies excluded from data extraction (Fig. 1 and Additional file 3). Thus, they provide a very low level of evidence.

One of the primary studies compared full and rapid reviews on the topics of drug eluting stents, lung volume reduction surgery, living donor liver transplantation and hip resurfacing [30, 36]. There were no instances in which the essential conclusions of the rapid and full reviews were opposed [32]. The other compared a rapid review with a full systematic review on the use of potato peels for burns [35]. The results and conclusions of the two reports were different. The authors of the rapid review suggest that this is because the systematic review was not of sufficiently good quality, as it missed two important trials in its search [35]. However, the limited detail on the methods used to conduct the systematic review makes this case study of limited value. Further research is needed in this area.

Impact of rapid syntheses on understanding of decision makers

The included RCT by Opiyo et al. [34] examined the impact of different evidence summary or synthesis formats on knowledge of the evidence, with each participant receiving a pack containing three different summaries; they found no differences between packs in the odds of correct responses to key clinical questions. Pack C (the rapid review) was associated with a higher mean composite score for clarity and accessibility of information about the quality of evidence for critical neonatal outcomes compared to systematic reviews alone (pack A) (adjusted mean difference 0.52, 95% confidence interval 0.06–0.99). Findings from interviews with 16 panellists indicated that short narrative evidence reports (pack C) were preferred for the improved clarity of information presentation and ease of use. The authors concluded that their "findings suggest that 'graded-entry' evidence summary formats may improve clarity and accessibility of research evidence in clinical guideline development" [34, p. 1].

Discussion

This review is the first high quality review (using systematic reviews as the gold standard for literature reviews) published in the literature that provides a comprehensive overview of the state of the rapid review literature. It highlights the lack of definition, lack of defined methods and lack of research evidence showing the implications of methodological choices on the results of both rapid reviews and systematic reviews. It also adds to the literature by offering clearer guidance for policy and practice than has been offered in previous reviews (see Implications for policy and practice).

While five systematic reviews of methods for rapid reviews were found, none of these were of sufficient quality to allow firm conclusions to be made. Thus, the findings need to be treated with caution. There is no agreed definition of rapid reviews in the literature and no agreed methodology for conducting rapid reviews [18, 19, 21, 30–33]. Nonetheless, the systematic reviews included in this review are consistent in stating that a rapid review is generally conducted in a shorter timeframe and may have a reduced scope. A wide range of 'shortcuts' are used to make rapid reviews faster than a full systematic review. While authors of the included systematic reviews tend to agree that changes to scope or timeframe can introduce biases (e.g. selection bias, publication bias, language of publication bias), they found little empirical evidence to support or refute that claim [18, 19, 21, 30, 32]. Further, there are few comparisons of full and rapid reviews available in the literature with which to determine the impact of these 'shortcuts'. There is some evidence from a good quality RCT with low risk of bias that rapid reviews may improve clarity and accessibility of research evidence for decision makers [34], which is a unique finding from our review.

A scoping review published after our search found over 20 different names for rapid reviews, with the most frequent term being 'rapid review', followed by 'rapid evidence assessment' and 'rapid systematic review' [23]. An associated international survey of rapid review producers and modified Delphi approach counted 31 different names [37]. With regard to rapid review methods and definitions, the scoping review found 50 unique methods, with 16 methods occurring more than once [23]. For their scoping review and international survey, Tricco et al. utilised the working definition: "a rapid review is a type of knowledge synthesis in which components of the systematic review process are simplified or omitted to produce information in a short period of time" [23, 37].

The authors of the most recent systematic review of rapid review methods suggest that: "the similarity of rapid products lies in their close relationship with the end-user to meet decision making needs in a limited timeframe" [32, p. 7]. They suggest that this feature drives other differences, including the large range of products often produced by rapid response groups, and the wide variation in methods used [32], even within the same product type produced by the same group. We suggest that this feature of rapid reviews needs to be part of the definition and considered in future research on rapid reviews, including whether it really leads to better uptake of research. To aid future research, we propose the following definition: a rapid review is a type of systematic review in which components of the systematic review process are simplified, omitted or made more efficient in order to produce information in a shorter period of time, preferably with minimal impact on quality. Further, rapid reviews involve a close relationship with the end-user and are conducted with the needs of the decision-maker in mind.

When comparing rapid reviews to systematic reviews, the confounding effects of the quality of the methods used must be considered. If rapid syntheses of research are seen as systematic reviews performed faster, and if systematic reviews are seen as the gold standard for evidence synthesis, then the quality of the review is likely to depend on which 'shortcuts' were taken, and this can be assessed using available quality measures, e.g. AMSTAR [28]. While Cochrane Collaboration systematic reviews are consistently of a very high quality (achieving 10 or 11 on the AMSTAR scale, based on our own experience), the same cannot be said for all systematic reviews found in the published literature or in databases of systematic reviews. This is demonstrated by this review, where AMSTAR scores were quite low (Additional file 5), and by a related overview, where AMSTAR scores varied between 2 and 10 [24, Additional file 1]. This fact has not been acknowledged in previous syntheses of the rapid review literature. It is also possible for rapid reviews to achieve high AMSTAR scores if conducted and reported well. Therefore, it can easily be argued that a high quality rapid review is likely to provide an answer closer to the 'truth' than a systematic review of low quality. This is also an argument for using the same tool to assess the quality of both systematic and rapid reviews.

Authors of the published systematic reviews of rapid reviews suggest that, rather than focusing on developing a formalised methodology, which may not be appropriate, researchers and users should focus on increasing the transparency of the methods used for each review [18, 30, 33]. Indeed, several AMSTAR criteria depend heavily on the transparency of the write-up rather than on the methodology itself. For instance, there are many examples of both systematic and rapid review authors not stating that they used a protocol for their review when, in fact, they did use one, leading to the loss of one point on the AMSTAR scale. Another example is review authors failing to provide an adequate description of the study selection and data extraction process, making it hard for those assessing the quality of the review to determine whether this was done in duplicate, which again costs one point on the AMSTAR scale.
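To illustrate how reporting alone can move a score, the following is a minimal Python (3.9+) sketch, not an official AMSTAR implementation. It assumes that the 11-item original AMSTAR checklist is scored by counting "yes" answers, that an item an assessor cannot verify from the write-up is recorded as "can't answer" and earns no point, and that scores are banded as 8–11 high, 4–7 medium and 0–3 low; that banding is a commonly used convention and is not taken from this article. The item keys, function names and example reviews are hypothetical. The review type (rapid or systematic) is deliberately not an input, consistent with using the same tool for both.

```python
from enum import Enum


class Answer(Enum):
    """Possible responses to an item of the original (2007) AMSTAR checklist."""
    YES = "yes"
    NO = "no"
    CANT_ANSWER = "can't answer"
    NOT_APPLICABLE = "not applicable"


# Hypothetical short keys for the 11 items of the original AMSTAR checklist.
AMSTAR_ITEMS = (
    "a_priori_design",                     # 1. protocol / 'a priori' design provided
    "duplicate_selection_and_extraction",  # 2. duplicate study selection and data extraction
    "comprehensive_search",                # 3. comprehensive literature search
    "grey_literature_status",              # 4. publication status not used to exclude reports
    "list_of_studies",                     # 5. included and excluded studies listed
    "study_characteristics",               # 6. characteristics of included studies given
    "quality_assessed",                    # 7. scientific quality assessed and documented
    "quality_used_in_conclusions",         # 8. quality used in formulating conclusions
    "appropriate_synthesis_methods",       # 9. methods for combining findings appropriate
    "publication_bias_assessed",           # 10. likelihood of publication bias assessed
    "conflict_of_interest_stated",         # 11. conflict of interest stated
)


def amstar_score(answers: dict[str, Answer]) -> int:
    """Count 'yes' answers; an item missing from `answers` earns no point."""
    unknown = set(answers) - set(AMSTAR_ITEMS)
    if unknown:
        raise ValueError(f"unknown AMSTAR items: {sorted(unknown)}")
    return sum(1 for item in AMSTAR_ITEMS if answers.get(item) is Answer.YES)


def quality_band(score: int) -> str:
    """Map a 0-11 score to a band; the 8/4 cut-offs are an assumed convention."""
    if not 0 <= score <= len(AMSTAR_ITEMS):
        raise ValueError(f"score out of range: {score}")
    if score >= 8:
        return "high"
    if score >= 4:
        return "medium"
    return "low"


# Hypothetical review: sound methods, but grey literature was excluded and
# publication bias could not be assessed because there was no meta-analysis.
baseline = {item: Answer.YES for item in AMSTAR_ITEMS}
baseline["grey_literature_status"] = Answer.NO
baseline["publication_bias_assessed"] = Answer.NOT_APPLICABLE

# Same methods, but the write-up never mentions the protocol or how screening
# and extraction were done, so an assessor cannot credit those items.
terse = dict(
    baseline,
    a_priori_design=Answer.CANT_ANSWER,
    duplicate_selection_and_extraction=Answer.CANT_ANSWER,
)

for label, report in (("baseline", baseline), ("terse", terse)):
    score = amstar_score(report)
    print(label, score, quality_band(score))
# baseline 9 high
# terse 7 medium
```

In this example the two reports describe identical methods and differ only in what the write-up states, yet the score drops by two points and, under the assumed banding, the review moves from high to medium quality.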

While it could be argued that none of the included reviews described themselves as systematic reviews, we believe that it is appropriate to assess their quality using the AMSTAR tool. To our knowledge, this is the best tool available to assess and compare the quality of review methods, and it considers the major potential sources of bias in reviews of the literature [28, 38]. Further, the five included reviews were clearly not narrative reviews, as each described its methods, including sources of studies, search terms and inclusion criteria used.

Strengths and limitations

A key strength of this rapid review is the use of high quality systematic review methodology, including the consideration of the scientific quality of the included studies in formulating conclusions. A meta-analysis was not possible due to the heterogeneity in terms of intervention types and populations studied in the included systematic reviews. As a result, publication bias could not be assessed quantitatively in this review, and no clear methods are available for assessing publication bias qualitatively [39]. Shortcuts taken to make this review more rapid, as well as an AMSTAR assessment of the review, are shown in Additional file 6. The AMSTAR assessment is based on the published tool [28] and additional guidance provided on the AMSTAR website (http://amstar.ca/Amstar_Checklist.php).

The current rapid review is evidence that a review can include several shortcuts and be produced in a relatively short amount of time without sacrificing quality, as shown by its high AMSTAR score (Additional file 6). The time taken to complete this review was 7 months from signing of the contract (November 2014) to submission of the final report to the funder (June 2015). Alternatively, if publication of the protocol on PROSPERO and the start of literature searching (January 2015) are taken as the starting point, the time taken was 5 months.

Limitations of this review include (1) the low quality of the systematic reviews found, with three of the four included systematic reviews judged as low quality on the AMSTAR criteria and the fourth only just reaching medium quality (Additional file 5); (2) the fact that few primary studies were conducted in developing countries, which is an issue for the generalisability of the results; and (3) restricting the search to articles in English, French, Spanish or Portuguese (languages in which the review authors are competent) and to the last 10 years. However, this restriction was made to expedite the review process and is unlikely to have resulted in the loss of important evidence.

Implications for policy and practice

Users of rapid reviews should request an AMSTAR rating and a clear indication of the shortcuts taken to make the review process faster. Producers of rapid reviews should give greater consideration to the 'write-up' or presentation of their reviews to make their review methods more transparent and to enable a fair quality assessment. This could be facilitated by including the appropriate elements in templates and/or guidelines. If a shorter report is required, the necessary detail could be placed in appendices.

When deciding what methods and/or processes to use for their rapid reviews, producers of rapid reviews should give priority to shortcuts that are unlikely to affect the quality or risk of bias of the review. Examples include limiting the scope of the review [19], limiting data extraction to key characteristics and results [32], and restricting the study types included in the review [32]. When planning the rapid review, the review producer should explain to the user the implications of any shortcuts taken to make the review faster.

Producers of rapid reviews should consider maintaining a larger, highly skilled and experienced staff who can be mobilised quickly and who understand the type of products that might meet the needs of the decision maker [19, 32]. Consideration should also be given to making the process more efficient [19]. These measures can help meet timelines without compromising quality.

Implications for research

The impact of any 'shortcuts' on the results of rapid reviews (and systematic reviews) requires further research, including which 'shortcuts' have the greatest impact on the review findings. Tricco et al. [23, 37] suggest that this could be examined through a prospective study that compares the results of rapid reviews to those obtained through systematic reviews on the same topic. However, to do this, it will be important to consider quality as a confounding factor and to ensure random selection and blinding of the rapid review producers. If random selection and blinding cannot be guaranteed, we suggest that retrospective comparisons may be more appropriate. Another, related approach would be to compare findings of reviews (be they systematic or rapid) for each type of shortcut, controlling for methodological quality. Other issues, such as the breadth of the inclusion criteria used and the number of studies included, would also need to be considered as possible confounding factors.

The development of reporting guidelines for rapid reviews, such as are available for full systematic reviews, would also help [18, 25]. These should be heavily based on systematic review guidelines but also consider characteristics specific to rapid reviews, such as the relationship with the review user.

Finally, future studies and reviews should also address the outcomes of review quality, satisfaction with methods and products, implementation and cost-effectiveness, as these outcomes were not measured in any of the included studies or reviews. The effectiveness of rapid reviews in increasing the use of research evidence in policy decision-making is also an important area for further research.

Conclusions

Care needs to be taken in interpreting the results of this rapid review on the best methodologies for rapid reviews, given the limited state of the literature. There is a wide range of methods currently used for rapid reviews and a wide range of products available. However, greater care needs to be taken to improve the transparency of the methods used in rapid review products, to enable better analysis of the implications of methodological 'shortcuts' taken for both rapid reviews and systematic reviews. This requires the input of policymakers and practitioners, as well as researchers. There is no evidence available to suggest that rapid reviews should not be done or that they are misleading in any way.

Abbreviations

- AMSTAR: A MeaSurement Tool to Assess Reviews

- PAHO: Pan American Health Organization

- PRISMA: Preferred reporting items for systematic reviews and meta-analysis

- RCT: Randomised controlled trial

References

1. World Health Assembly. Resolution on Health Research. 2005. http://www.who.int/rpc/meetings/58th_WHA_resolution.pdf. Accessed 17 Nov 2016.

2. World Health Organization. Bridging the "Know–Do" Gap: Meeting on Knowledge Translation in Global Health, 10–12 October 2005. 2006 Contract No. WHO/EIP/KMS/2006.2. Geneva: WHO; 2006.

3. Lavis J, Davies H, Oxman A, Denis JL, Golden-Biddle K, Ferlie E. Towards systematic reviews that inform health care management and policy-making. J Health Serv Res Policy. 2005;10 Suppl 1:35–48.

4. Liverani M, Hawkins B, Parkhurst JO. Political and institutional influences on the use of evidence in public health policy. A systematic review. PLoS One. 2013;8:e77404.

5. Moore G, Redman S, Haines A, Todd A. What works to increase the use of research in population health policy and programmes: a review. Evid Policy A J Res Debate Pract. 2011;7:277–305.

6. Nutley S. Bridging the policy/research divide. Reflections and lessons from the UK. Keynote paper presented at "Facing the future: Engaging stakeholders and citizens in developing public policy". Canberra: National Institute of Governance Conference; 2003. http://www.treasury.govt.nz/publications/media-speeches/guestlectures/nutley-apr03. Accessed 17 Nov 2016.

7. Oliver K, Innvar S, Lorenc T, Woodman J, Thomas J. A systematic review of barriers to and facilitators of the use of evidence by policymakers. BMC Health Serv Res. 2014;14:2.

8. Orton L, Lloyd-Williams F, Taylor-Robinson D, O'Flaherty M, Capewell S. The use of research evidence in public health decision making processes: systematic review. PLoS One. 2011;6:e21704.

9. World Health Organization. Knowledge Translation Framework for Ageing and Health. Geneva: Department of Ageing and Life-Course, WHO; 2012.

10. Lavis JN, Hammill AC, Gildiner A, McDonagh RJ, Wilson MG, Ross SE, et al. A systematic review of the factors that influence the use of research evidence by public policymakers. Final report submitted to the Canadian Population Health Initiative. Hamilton: McMaster University Program in Policy Decision-Making; 2005.

11. Chalmers I. If evidence-informed policy works in practice, does it matter if it doesn't work in theory? Evid Policy A J Res Debate Pract. 2005;1:227–42.

12. Lavis JN, Permanand G, Oxman AD, Lewin S, Fretheim A. SUPPORT Tools for evidence-informed health Policymaking (STP) 13: Preparing and using policy briefs to support evidence-informed policymaking. Health Res Pol Syst. 2009;7 Suppl 1:S13.

13. Stephens JM, Handke B, Doshi JA, On behalf of the HTA Principles Working Group - part of the International Society for Pharmacoeconomics and Outcomes Research (ISPOR) HTA Special Interest Group (SIG). International survey of methods used in health technology assessment (HTA): does practice meet the principles proposed for good research? Comp Effectiveness Res. 2012;2:29–44.

14. World Health Organization. WHO Handbook for Guideline Development. 2nd ed. Geneva: WHO; 2014.

15. Khangura S, Konnyu K, Cushman R, Grimshaw J, Moher D. Evidence summaries: the evolution of a rapid review approach. Syst Rev. 2012;1:10.

16. Khangura S, Polisena J, Clifford TJ, Farrah K, Kamel C. Rapid review: an emerging approach to evidence synthesis in health technology assessment. Int J Technol Assess Health Care. 2014;30:20–7.

17. Polisena J, Garrity C, Kamel C, Stevens A, Abou-Setta AM. Rapid review programs to support health care and policy decision making: a descriptive analysis of processes and methods. Syst Rev. 2015;4:26.

18. Ganann R, Ciliska D, Thomas H. Expediting systematic reviews: methods and implications of rapid reviews. Implement Sci. 2010;5:56.

19. Abrami PC, Borokhovski E, Bernard RM, Wade CA, Tamim R, Persson T, et al. Issues in conducting and disseminating brief reviews of evidence. Evid Policy A J Res Debate Pract. 2010;6:371–89.

20. Gough D, Thomas J, Oliver S. Clarifying differences between review designs and methods. Syst Rev. 2012;1:28.

21. Harker J, Kleijnen J. What is a rapid review? A methodological exploration of rapid reviews in Health Technology Assessments. Int J Evid Based Healthc. 2012;10:397–410.

22. Wilson MG, Lavis JN, Gauvin FP. Developing a rapid-response program for health system decision-makers in Canada: findings from an issue brief and stakeholder dialogue. Syst Rev. 2015;4:25.

23. Tricco AC, Antony J, Zarin W, Strifler L, Ghassemi M, Ivory J, et al. A scoping review of rapid review methods. BMC Med. 2015;13:224.

24. Haby MM, Chapman E, Clark R, Barreto J, Reveiz L, Lavis JN. Designing a rapid response program to support evidence-informed decision making in the Americas Region: using the best available evidence and case studies. Implement Sci. 2016;11:117.

25. Moher D, Liberati A, Tetzlaff J, Altman DG, Group P. Preferred reporting items for systematic reviews and meta-analyses: the PRISMA statement. PLoS Med. 2009;6:e1000097.

26. Haby M, Chapman E, Reveiz L, Barreto J, Clark R. Methodologies for rapid response for evidence-informed decision making in health policy and practice: an overview of systematic reviews and primary studies (Protocol). PROSPERO: CRD42015015998. 2015. http://www.crd.york.ac.uk/PROSPEROFILES/15998_PROTOCOL_20150016.pdf.

27. World Health Organization. Report from the Ministerial Summit on Health Research: Identify Challenges, Inform Actions, Correct Inequities. Mexico City: WHO; 2005. http://www.who.int/rpc/summit. Accessed 17 Nov 2016.

28. Shea BJ, Grimshaw JM, Wells GA, Boers M, Andersson N, Hamel C, et al. Development of AMSTAR: a measurement tool to assess the methodological quality of systematic reviews. BMC Med Res Methodol. 2007;7:10.

29. Higgins JPT, Green S, editors. Cochrane Handbook for Systematic Reviews of Interventions Version 5.1.0 [updated March 2011]. The Cochrane Collaboration; 2011. http://handbook.cochrane.org/. Accessed 17 Nov 2016.

30. Cameron A, Watt A, Lathlean T, Sturm L. Rapid versus full systematic reviews: an inventory of current methods and practice in Health Technology Assessment. ASERNIP-S Report No. 60. Adelaide: ASERNIP-S, Royal Australasian College of Surgeons; 2007.

31. Featherstone RM, Dryden DM, Foisy M, Guise JM, Mitchell MD, Paynter RA, et al. Advancing knowledge of rapid reviews: an analysis of results, conclusions and recommendations from published review articles examining rapid reviews. Syst Rev. 2015;4:50.

32. Hartling L, Guise JM, Kato E, Anderson J, Aronson N, Belinson S, et al. EPC Methods: An Exploration of Methods and Context for the Production of Rapid Reviews. Research White Paper. Prepared by the Scientific Resource Center under Contract No. 290-2012-00004-C. AHRQ Publication No. 15-EHC008-EF. Rockville, MD: Agency for Healthcare Research and Quality; 2015.

33. Watt A, Cameron A, Sturm L, Lathlean T, Babidge W, Blamey S, et al. Rapid reviews versus full systematic reviews: an inventory of current methods and practice in health technology assessment. Int J Technol Assess Health Care. 2008;24:133–9.

34. Opiyo N, Shepperd S, Musila N, Allen E, Nyamai R, Fretheim A, et al. Comparison of alternative evidence summary and presentation formats in clinical guideline development: a mixed-method study. PLoS One. 2013;8:e55067.

35. Van de Velde S, De Buck E, Dieltjens T, Aertgeerts B. Medicinal use of potato-derived products: conclusions of a rapid versus full systematic review. Phytother Res. 2011;25:787–8.

36. Watt A, Cameron A, Sturm L, Lathlean T, Babidge W, Blamey S, et al. Rapid versus full systematic reviews: validity in clinical practice? Aust N Z J Surg. 2008;78:1037–40.

37. Tricco AC, Zarin W, Antony J, Hutton B, Moher D, Sherifali D, et al. An international survey and modified Delphi approach revealed numerous rapid review methods. J Clin Epidemiol. 2015;70:61–7.

38. Shea BJ, Hamel C, Wells GA, Bouter LM, Kristjansson E, Grimshaw J, et al. AMSTAR is a reliable and valid measurement tool to assess the methodological quality of systematic reviews. J Clin Epidemiol. 2009;62:1013–20.

39. Song F, Parekh S, Hooper L, Loke YK, Ryder J. Dissemination and publication of research findings: an updated review of related biases. Health Technol Assess. 2010;14(8):iii, ix–xi, 1–193.

Acknowledgements

We thank the authors of included studies and other experts in the field who responded to our request to identify further studies that could meet our inclusion criteria and/or responded to our queries regarding their study.

Funding

This work was developed and funded under cooperation agreement #47 between the Department of Science and Technology of the Ministry of Health of Brazil and the Pan American Health Organization. The funders of this study set the terms of reference for the project but, apart from the input of JB, EC and LR to the conduct of the study, did not significantly influence the work. Manuscript preparation was funded by the Ministry of Health of Brazil, through an EVIPNet Brazil project with Bireme/PAHO.

Availability of information and materials

The datasets supporting the conclusions of this article are included within the article and its additional files.

Authors' contributions

EC and JB had the original idea for the review and obtained funding; MH and EC wrote the protocol with input from RC, JB and LR; MH and RC undertook the article selection, data extraction and quality assessment; MH undertook data synthesis and drafted the manuscript; JL, EC, RC, JB and LR provided guidance throughout the selection, data extraction and synthesis phases of the review; all authors provided commentary on and approved the final manuscript.

Competing interests

The author(s) declare that they have no competing interests. Neither the Ministry of Health of Brazil nor the Pan American Health Organization (PAHO), the funders of this research, has a vested interest in any of the interventions included in this review, though they do have a professional interest in increasing the uptake of research evidence in decision making. EC and LR are employees of PAHO and JB was an employee of the Ministry of Health of Brazil at the time of the study. However, the views and opinions expressed herein are those of the review authors and do not necessarily reflect the views of the Ministry of Health of Brazil or PAHO. JNL is involved in a rapid response service but was not involved in the selection or data extraction phases. MH, as part of her previous employment with an Australian state government department of health, was responsible for commissioning and using rapid reviews to inform decision making.

Consent for publication

Not applicable.

Ethics approval and consent to participate

Not applicable.

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The Creative Commons Public Domain Dedication waiver (http://creativecommons.org/publicdomain/zero/1.0/) applies to the data made available in this article, unless otherwise stated.

About this article

Cite this article

Haby, M.M., Chapman, E., Clark, R. et al. What are the best methodologies for rapid reviews of the research evidence for evidence-informed decision making in health policy and practice: a rapid review. Health Res Policy Sys 14, 83 (2016). https://doi.org/10.1186/s12961-016-0155-7

DOI: https://doi.org/10.1186/s12961-016-0155-7

Keywords

- Rapid reviews

- Knowledge translation

- Evidence-informed decision-making

- Research uptake

- Health policy

Source: https://health-policy-systems.biomedcentral.com/articles/10.1186/s12961-016-0155-7
